Fog and edge compute, analytics and machine learning

Data that has been transformed from a physical analog event into a digital signal may have actionable consequences. This is where the analytics and rules engines of the IoT come into play. The level of sophistication of an IoT deployment depends on the solution being architected. In some situations, a simple rules engine looking for anomalous temperature extremes can easily be deployed on an edge router monitoring several sensors, as in the Kamk weather station example. In other situations, a massive amount of structured and unstructured data may stream in real time to a cloud-based data lake and require both fast processing for predictive analytics and long-range forecasting using advanced machine learning models, such as recurrent neural networks in a time-correlated signal analysis package.
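To make the simple rules engine mentioned above more concrete, here is a minimal sketch in Python. It is only an illustration: the `Reading` type, the `find_temperature_alerts` function, the sensor names and the threshold values are all assumptions made for this example and do not come from the weather station deployment.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    """One temperature sample from one sensor (hypothetical structure)."""
    sensor_id: str
    celsius: float


def find_temperature_alerts(readings, low=-30.0, high=45.0):
    """Return (sensor_id, celsius, rule) tuples for readings outside [low, high].

    The default thresholds are illustrative assumptions, not recommended values.
    """
    alerts = []
    for reading in readings:
        if reading.celsius < low:
            alerts.append((reading.sensor_id, reading.celsius, "too_cold"))
        elif reading.celsius > high:
            alerts.append((reading.sensor_id, reading.celsius, "too_hot"))
    return alerts


# Example input: three sensors attached to one edge router (made-up values).
readings = [
    Reading("roof", 21.5),
    Reading("mast", 61.0),
    Reading("ground", -41.2),
]
alerts = find_temperature_alerts(readings)
for sensor_id, celsius, rule in alerts:
    print(f"{sensor_id}: {celsius} C triggered rule {rule}")
```

A rule set this small needs no cloud connection to evaluate, which is why it can run on a resource-constrained edge router; only the alerts need to be forwarded.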

Edge computing

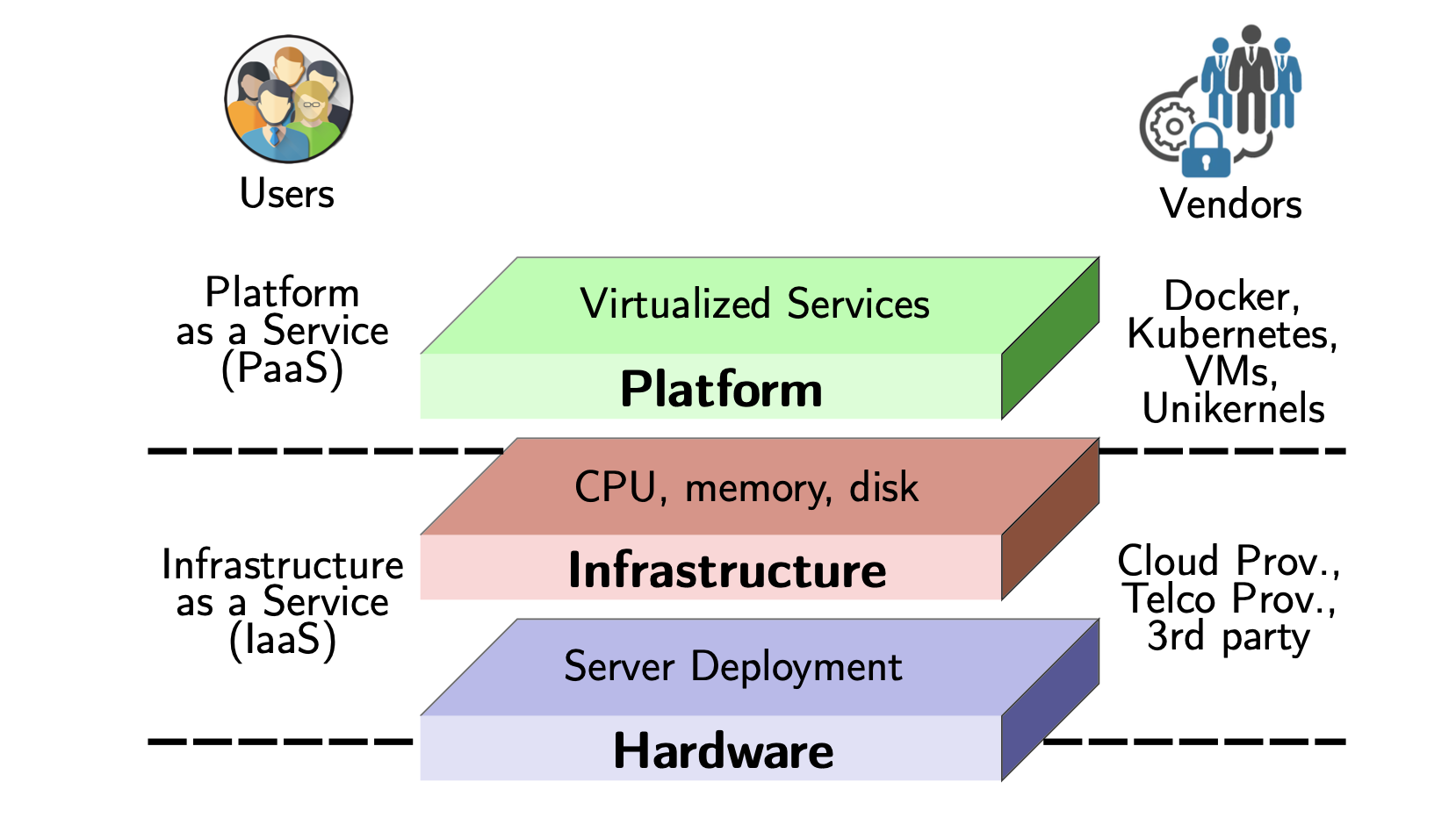

Edge computing is a new cloud paradigm that aims to bring existing cloud services and utilities closer to end users, as shown in Picture 5. Also termed edge clouds, the central objective behind this upcoming cloud platform is to reduce the network load on the cloud by utilizing compute resources in the vicinity of users and IoT sensors. Dense geographical deployment of edge clouds in an area not only allows for optimal operation of delay-sensitive applications but also provides support for mobility, context awareness and data aggregation in computations.

However, the added functionality of edge clouds comes at the cost of incompatibility with existing cloud infrastructure. For example, while data center servers are closely monitored by the cloud providers to ensure reliability and security, edge servers are meant to operate in unmanaged, publicly shared environments. Moreover, several edge cloud approaches aim to incorporate crowdsourced compute resources near the location of end users, such as smartphones, desktops and tablets, to meet stringent latency demands.

Please see the one-minute video clip by Schneider Electric: https://www.youtube.com/watch?v=1vFdnhsI1Nk and this video clip by IBM: https://www.youtube.com/watch?v=cEOUeItHDdo&feature=emb_rel_end

Picture 5. Edge computing and services.


Edge servers

The Edge layer is composed of loosely coupled compute resources with one-to-two hop network latency from the end users. Because of their close proximity to the user, Edge servers rely on direct wireless connectivity (e.g. short-range Wi-Fi, LTE, 5G or Bluetooth) for communication and coordination with other Edge servers and users alike.

These devices are often small, with limited computational and storage capacity. Typical examples of Edge servers are smartphones, tablets, desktops and smart speakers. The majority of Edge devices participate as crowdsourced servers in the Edge-Fog Cloud and are owned and operated by independent third-party entities.

Despite their limited capability, Edge resources are highly dynamic in terms of availability, routing and so on, and can collectively address several strict application requirements. The ad hoc nature of this layer makes it a strong candidate for handling contextual user requests and for supporting services such as disaster relief, autonomous vehicles and robotic systems.

Enterprise edge computing examples

The following industries are examples of enterprise sectors that could benefit from edge computing:

Manufacturing: reducing the amount of data going to the cloud for applications such as predictive maintenance, and eventually moving operational technology to generic edge compute platforms to run processes in a cloud-like way while still maintaining the reliability of an on-premises deployment.

Retail: using edge computing to reduce latency in order to create rich, interactive experiences in stores or at home, e.g. using augmented reality for online shopping.

Oil and gas: reducing the reliance on the cloud to enable IoT-based applications.

Enterprise ICT: applications such as virtual desktops could run from the edge to reduce the latency experienced when running them from the cloud.

Healthcare: allowing hospitals to innovate and use more cloud-like applications while still ensuring that data is handled securely rather than sent to a remote server.
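Several of the examples above, manufacturing in particular, depend on reducing the amount of data sent to the cloud. One common way to do this is to summarize raw samples at the edge and upload only the summaries. The sketch below illustrates the idea under stated assumptions: the function names `summarize_window` and `aggregate`, the window size and the sample values are hypothetical and chosen only for this example.

```python
from statistics import mean


def summarize_window(samples):
    """Collapse one window of raw samples into a small summary record."""
    if not samples:
        raise ValueError("window must contain at least one sample")
    return {
        "count": len(samples),
        "min": min(samples),
        "max": max(samples),
        "mean": mean(samples),
    }


def aggregate(samples, window_size):
    """Split samples into consecutive windows and summarize each one.

    The last window may be shorter than window_size.
    """
    if window_size < 1:
        raise ValueError("window_size must be at least 1")
    return [
        summarize_window(samples[start:start + window_size])
        for start in range(0, len(samples), window_size)
    ]


# Six raw samples (made-up values) become two summary records for upload.
raw = [20.0, 21.0, 22.0, 23.0, 30.0, 32.0]
summaries = aggregate(raw, window_size=3)
for summary in summaries:
    print(summary)
```

With a larger window, the ratio of raw samples to uploaded records grows accordingly, at the cost of coarser detail in the cloud. Whether that trade-off is acceptable depends on the application.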