How to use Docker for IoT - Case examples

After your IoT project is up and running, many devices will be producing lots of data. You need an efficient, scalable, affordable way to both manage those devices and handle all that information and make it work for you. When it comes to storing, processing, and analyzing data, especially big data, it's hard to beat the cloud.

This chapter introduces you to the world of containers and container orchestration, starting from the very basics and providing the steps needed to take IoT data from sensor to cloud. The Docker Engine itself is not available in this learning environment, but you can install Docker Desktop on your own computer or use the Play with Docker online learning environment. Play with Docker offers hands-on tutorials right in the browser, instructions on setting up and using Docker in your own environment, and resources about best practices for developing and deploying your own applications.

A short history of Docker, from Docker.com

The launch of Docker in 2013 jump started a revolution in application development - by democratizing software containers. Docker developed a Linux container technology - one that is portable, flexible and easy to deploy. Docker open sourced libcontainer and partnered with a worldwide community of contributors to further its development. In June 2015, Docker donated the container image specification and runtime code now known as runc, to the Open Container Initiative (OCI) to help establish standardization as the container ecosystem grows and matures. Following this evolution, Docker continues to give back with the containerd project, which Docker donated to the Cloud Native Computing Foundation (CNCF) in 2017. containerd is an industry-standard container runtime that leverages runc and was created with an emphasis on simplicity, robustness and portability. containerd is the core container runtime of the Docker Engine.

Let us think for a moment about what a container is. According to the Docker.com article "What is a container?":

A container is a standard unit of software that packages up code and all its dependencies so the application runs quickly and reliably from one computing environment to another. A Docker container image is a lightweight, standalone, executable package of software that includes everything needed to run an application: code, runtime, system tools, system libraries and settings.

Now we can simply take all the buzzwords one by one, and find out what they mean!

Standard unit of software refers to a standardized package format: every container is built in a well-defined way on top of a base image. It also reflects the fact that Docker containers are the industry standard and can be ported to and executed on many different platforms.

The lightweight executable package means that a Docker container image contains all the software the application needs, i.e. the user-space parts of an operating system, libraries and configuration files, and that it is executed in an isolated environment on the host operating system of the machine the containers run on. The kernel is not part of the image; it is shared with the host. The host operating system may be Linux or Windows based, and the application runs the same on both. The same container can be run on the developer's own computer and deployed as such in a cloud service.

Docker.com states that:

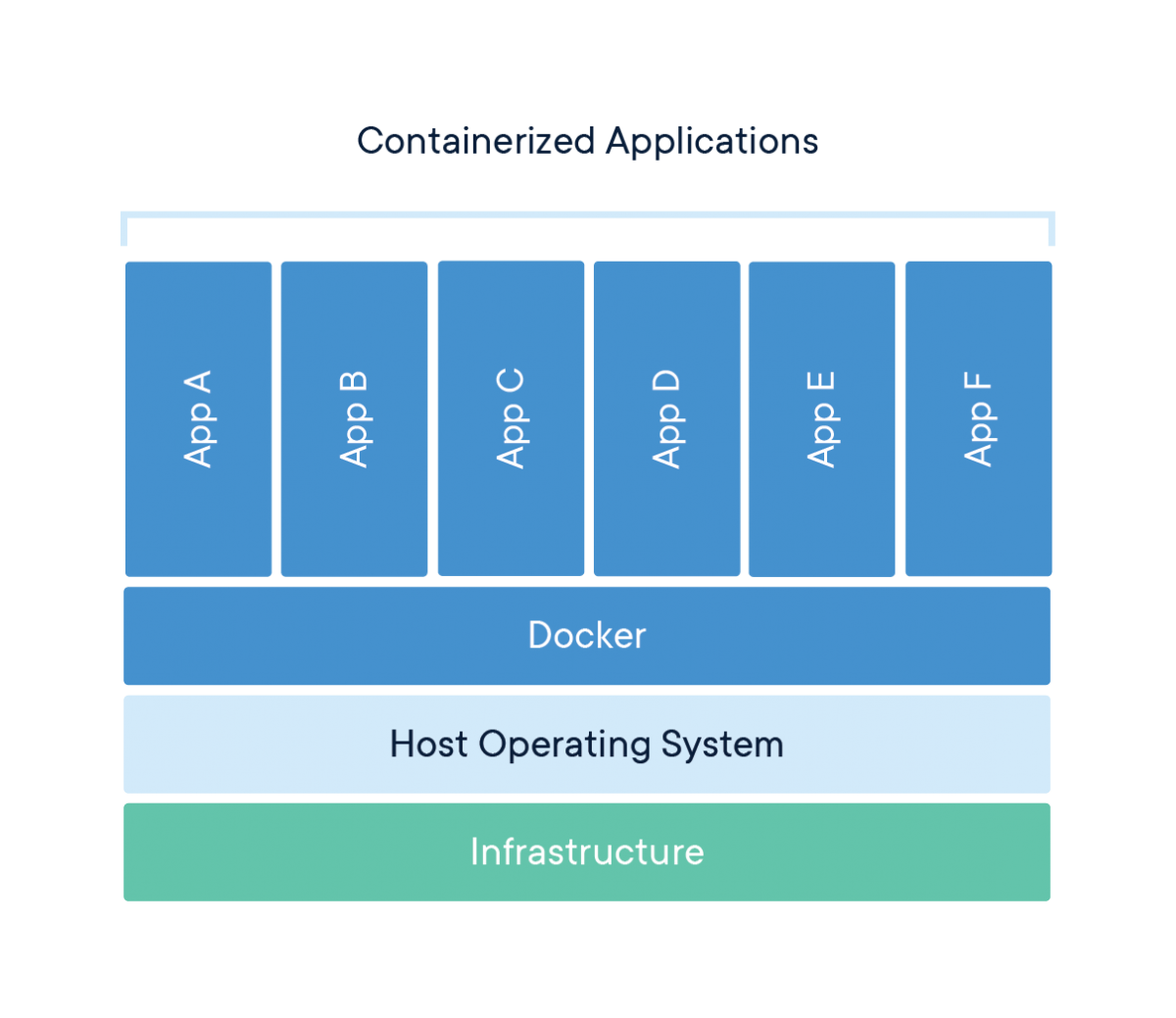

Containers are an abstraction at the app layer that packages code and dependencies together. Multiple containers can run on the same machine and share the OS kernel with other containers, each running as isolated processes in user space. Containers take up less space than VMs (container images are typically tens of MBs in size), can handle more applications and require fewer VMs and Operating systems.

Picture 7. Container architecture.

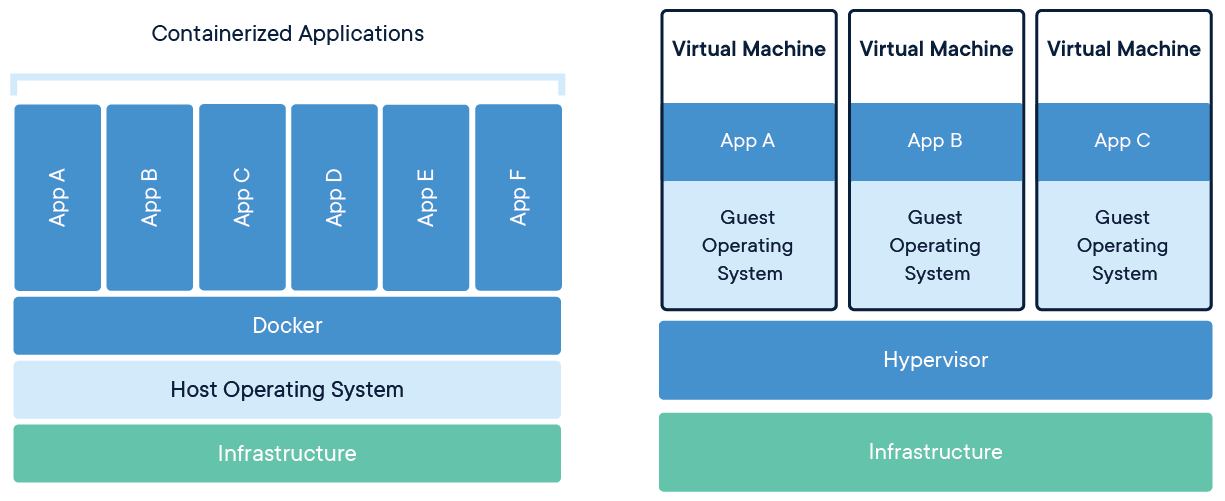

Compare this with virtual machines (VMs):

Virtual machines (VMs) are an abstraction of physical hardware turning one server into many servers. The hypervisor allows multiple VMs to run on a single machine. Each VM includes a full copy of an operating system, the application, necessary binaries and libraries - taking up tens of GBs. VMs can also be slow to boot.

Picture 8. Containers compared to VMs.

In 2020, Docker is the industry-standard container technology, with hosted events such as DockerCon 2020 drawing a grand total of 78,000 registered attendees. Scott Johnston, the Chief Executive Officer at Docker, has made some predictions about what 2020 will bring for software development, and three big development trends will likely surface. Gain the upper hand and learn how to go container-first now: more than 75% of global organizations are expected to run containerized apps by 2022.

Simple Ubuntu 18.04 container

So without further ado, let us play with Docker a bit. These examples are run on the Windows 10 Pro operating system using Git for Windows and Docker Desktop (Docker version 19.03.8).

Now we want to create a simple Ubuntu Linux container with only the very basic libraries provided in the base image, and add the iputils-ping package to it. The contents of the container are defined in a file named Dockerfile. The Dockerfile for Example #1 is shown below.

Note! If you want to run these commands on your own Windows machine, you may need to install winpty and prefix the docker commands with winpty, or define an alias on the command prompt:

alias docker="winpty docker"

Contents for the file named Dockerfile

# This is the content for the Dockerfile: base image + iputils package

# Once the Docker container starts, it starts pinging the localhost 127.0.0.1

FROM ubuntu:18.04

RUN apt update && apt install -y iputils-ping

CMD ping 127.0.0.1

# Dockerfile ends here

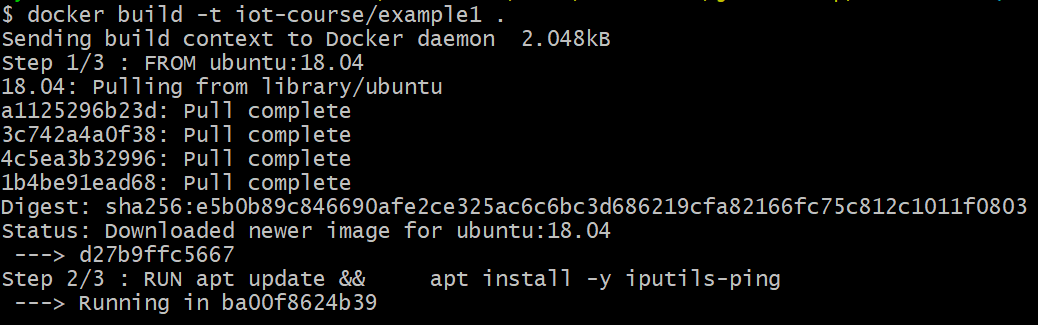

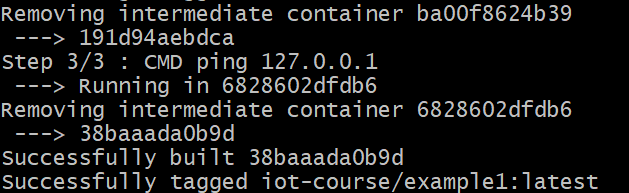

Now you can build your first container image with the command docker build -t iot-course/example1 . Note the trailing dot at the end of the command: it sets the build context to the current working directory, which is also where docker build looks for the Dockerfile by default.

Once you run the docker build -t iot-course/example1 . command, the log will be a bit long, but at the end you should have your Docker image ready. The FROM instruction selects the base image of the Docker container. If the image does not exist on your computer, it is automatically downloaded from Docker Hub. The RUN instructions are executed while the image is being built. In this particular example we first update the Ubuntu package lists with the command apt update. Once the package lists are up to date, we execute the command apt install -y iputils-ping, which downloads and installs the iputils-ping package for us. The installation happens inside the image and does not affect your host computer, other than taking up a bit of your hard drive.

According to the build log, "After this operation, 537 kB of additional disk space will be used." The actual size may be a bit more, as the installation procedure also downloads 142 kB of archives (i.e. compressed package files) and unpacks them.

Additional log for the docker build command:

Reading package lists...

Building dependency tree...

Reading state information...

The following additional packages will be installed:

libcap2 libcap2-bin libidn11 libpam-cap

The following NEW packages will be installed:

iputils-ping libcap2 libcap2-bin libidn11 libpam-cap

0 upgraded, 5 newly installed, 0 to remove and 3 not upgraded.

Need to get 142 kB of archives.

After this operation, 537 kB of additional disk space will be used.

Get:1 http://archive.ubuntu.com/ubuntu bionic/main amd64 libcap2 amd64 1:2.25-1.2 [13.0 kB]

Get:2 http://archive.ubuntu.com/ubuntu bionic-updates/main amd64 libidn11 amd64 1.33-2.1ubuntu1.2 [46.6 kB]

Get:3 http://archive.ubuntu.com/ubuntu bionic-updates/main amd64 iputils-ping amd64 3:20161105-1ubuntu3 [54.2 kB]

Get:4 http://archive.ubuntu.com/ubuntu bionic/main amd64 libcap2-bin amd64 1:2.25-1.2 [20.6 kB]

Get:5 http://archive.ubuntu.com/ubuntu bionic/main amd64 libpam-cap amd64 1:2.25-1.2 [7268 B]

Fetched 142 kB in 2s (79.4 kB/s)
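The 142 kB reported by apt can be cross-checked against the individual "Get:" lines of the log. The snippet below is only a small arithmetic check; it assumes that apt reports sizes in decimal units (1 kB = 1000 B), and the dictionary is simply a transcription of the log above.

```python
# Package sizes in kB, copied from the "Get:" lines of the build log.
# Assumption: apt uses decimal units, so 7268 B is 7.268 kB.
package_sizes_kb = {
    "libcap2": 13.0,
    "libidn11": 46.6,
    "iputils-ping": 54.2,
    "libcap2-bin": 20.6,
    "libpam-cap": 7268 / 1000,
}

total_kb = sum(package_sizes_kb.values())
print(f"{len(package_sizes_kb)} packages, {total_kb:.1f} kB in total")
```

The sum rounds to the 142 kB that apt reports as "Need to get" and "Fetched".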

Now we have the container image ready to be executed with the command docker run iot-course/example1. Once started, the container pings itself (localhost, IP 127.0.0.1) until the operation is interrupted with CTRL-C.

The specified Docker image iot-course/example1 is started as a container within the Docker Engine. The command executed inside the container is the one given by the CMD instruction in the Dockerfile:

CMD ping 127.0.0.1

This is all that is needed to build and run a simple container, once the Docker Engine is running on the host computer. Visit the Docker Engine documentation for more detailed information. Now let us continue with a slightly more IoT-specific topic: running a Python temperature & humidity measurement script on a Raspberry Pi!

Temperature & Humidity measurement

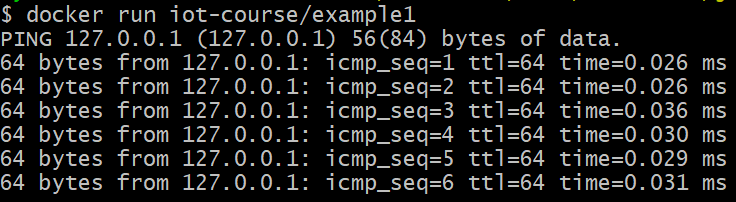

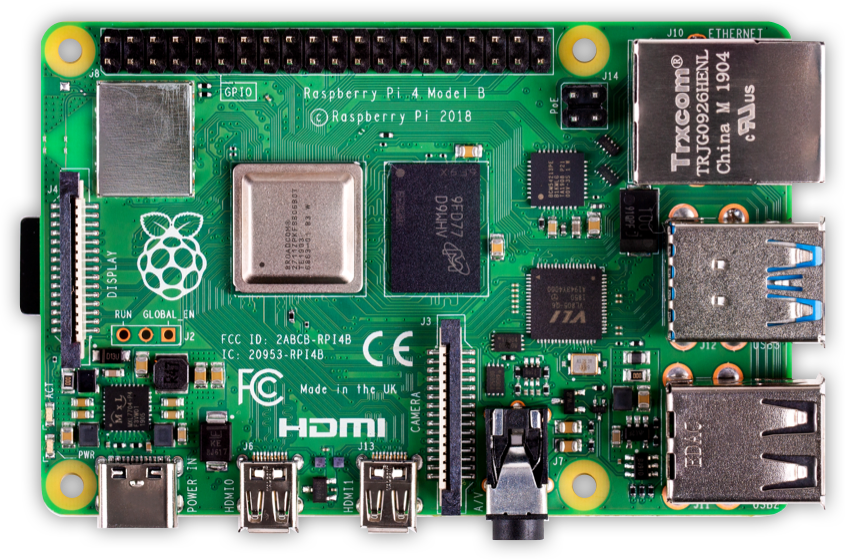

This example covers temperature and humidity measurement on an ARM-based Raspberry Pi single-board computer using a DHT22 sensor module (the module includes the 10k pull-up resistor on board). The Raspberry Pi 4 and the DHT22 are shown in the pictures below.

Picture 9. Raspberry Pi 4.

Picture 10. DHT-22 sensor.

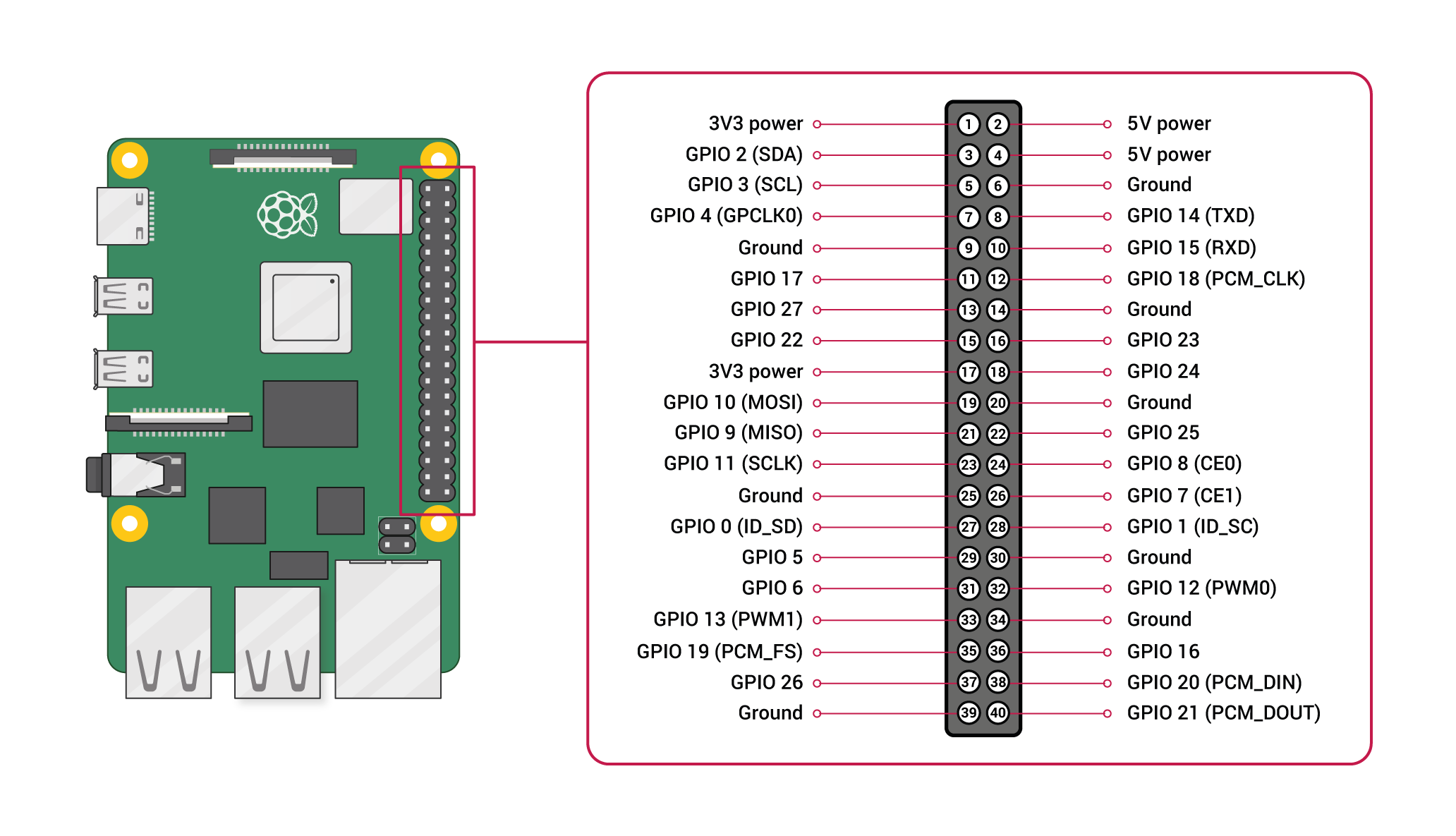

A similar setup is available in this YouTube video. The video uses a breadboard to connect the DHT22 sensor and the pull-up resistor to the Raspberry Pi. In our example, the two DHT22 sensor modules are connected to GPIO 17 and 18 (i.e. connector pins 11 and 12). The Raspberry Pi GPIO layout can be found at https://www.raspberrypi.org/documentation/usage/gpio/.

Picture 11. Raspberry Pi GPIO layout.

The main purpose is to Dockerize our Python application, so let's get started with that. We need a Dockerfile to define the contents of the container. It should look like this.

Dockerfile

FROM ubuntu:18.04

COPY . /app

WORKDIR /app

# Get the latest software from the repositories

RUN apt update

# Install python3 environment

RUN apt install -y python3-pip python3

# Install Adafruit library

RUN pip3 install Adafruit_DHT

# Run read_sensors.py script

ENTRYPOINT ["python3"]

CMD ["read_sensors.py"]

The steps needed to build the Docker image are explained in the comments above. The ENTRYPOINT points to the Python interpreter and CMD provides the parameter(s) to the ENTRYPOINT command.
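How ENTRYPOINT and CMD combine can be illustrated with a small model. The sketch below is not Docker code; it is a simplified Python model of the documented rules for Linux containers, and the function names as_argv and container_argv are made up for this illustration. A list stands for the exec form (as in ENTRYPOINT ["..."]), a plain string for the shell form (as in CMD ping 127.0.0.1).

```python
from typing import List, Optional, Sequence, Union

# A Dockerfile instruction value: shell form (str), exec form (list) or absent (None).
Instruction = Union[str, Sequence[str], None]


def as_argv(instruction: Instruction) -> List[str]:
    """Exec form is used as-is; shell form is wrapped in /bin/sh -c."""
    if instruction is None:
        return []
    if isinstance(instruction, str):
        return ["/bin/sh", "-c", instruction]
    return list(instruction)


def container_argv(entrypoint: Instruction, cmd: Instruction,
                   run_args: Optional[Sequence[str]] = None) -> List[str]:
    """Simplified model of the process a container starts."""
    if isinstance(entrypoint, str):
        # A shell-form ENTRYPOINT ignores both CMD and docker run arguments.
        return as_argv(entrypoint)
    # Arguments given after the image name on docker run replace CMD.
    tail = list(run_args) if run_args else as_argv(cmd)
    return as_argv(entrypoint) + tail


# Example 1: no ENTRYPOINT, shell-form CMD.
print(container_argv(None, "ping 127.0.0.1"))
# Example 2: exec-form ENTRYPOINT plus exec-form CMD.
print(container_argv(["python3"], ["read_sensors.py"]))
```

In this model, passing another script name after the image name on docker run would replace read_sensors.py while the interpreter from ENTRYPOINT stays the same, which is the usual reason for splitting the two instructions.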

We build the image with the command docker build -t iot-course/example2 . and can then run the container on a Raspberry Pi 4 with the command docker run --privileged iot-course/example2

pi@cluster2:~/code/dwbl $ docker run --privileged iot-course/example2

(36.20000076293945, 24.899999618530273)

(34.70000076293945, 25.200000762939453)

This prints out the humidity (first value) and the temperature (second value) of both sensors. The --privileged parameter is needed to give the Docker container access to the hardware. In some cases it may also be necessary to make the /dev/mem device of the host operating system accessible inside the container.
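The read_sensors.py script itself is not listed in this chapter. The following is a minimal sketch of what such a script could look like, not the original file. It assumes the Adafruit_DHT library installed in the Dockerfile, its read_retry function, and that the two sensors are addressed by their BCM GPIO numbers 17 and 18; the helper names are hypothetical.

```python
from typing import Callable, Iterable, List, Optional, Tuple

Reading = Tuple[Optional[float], Optional[float]]  # (humidity %, temperature in Celsius)

# Assumption: BCM GPIO numbers of the two DHT22 data lines (connector pins 11 and 12).
DHT_PINS = (17, 18)


def make_dht22_reader() -> Callable[[int], Reading]:
    """Return a function that reads one DHT22; needs Adafruit_DHT and real hardware."""
    import Adafruit_DHT  # imported here so the file still loads without the library

    def read(pin: int) -> Reading:
        # read_retry returns (humidity, temperature), or (None, None) if all attempts fail.
        return Adafruit_DHT.read_retry(Adafruit_DHT.DHT22, pin)

    return read


def read_all(read: Callable[[int], Reading], pins: Iterable[int]) -> List[Reading]:
    """Read every sensor once, in pin order."""
    return [read(pin) for pin in pins]


def main() -> None:
    """Print one (humidity, temperature) tuple per sensor."""
    for reading in read_all(make_dht22_reader(), DHT_PINS):
        print(reading)


if __name__ == "__main__":
    try:
        main()
    except ImportError:
        print("Adafruit_DHT is not installed; run this on the Raspberry Pi.")
```

Keeping the hardware access behind a small reader function makes it possible to try the rest of the logic on a development machine with a fake reader before building the image for the Raspberry Pi.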

Docker Example Three: Publish measurements to internet

Now that we are able to interface the DHT22 sensors with our Raspberry Pi, let us make the measured values available on the internet. The Raspberry Pi should have enough power to run a web server. This can be done with the Flask library, as shown in the previous chapter. We need only a little tweak to our Dockerfile. Let us name the modified file Dockerfile-web.

Dockerfile-web

FROM ubuntu:18.04

COPY . /app

WORKDIR /app

# Get the latest software from the repositories

RUN apt update

# Install python3 environment

RUN apt install -y python3-pip python3

# Install Flask and the Adafruit library

RUN pip3 install flask Adafruit_DHT

# Expose container port 5000

EXPOSE 5000

# Run webserver.py script

ENTRYPOINT ["python3"]

CMD ["webserver.py"]
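The webserver.py script is not listed here either. A minimal sketch of how it might be structured is given below. The route pattern and the JSON field names are inferred from the URLs and the datalogger code used later in this chapter; the sensor_type value "DHT22", the pin mapping and the helper names are assumptions made for this illustration.

```python
from typing import Callable, Dict, Optional, Tuple

Reading = Tuple[Optional[float], Optional[float]]  # (humidity, temperature)

# Assumption: sensor number mapped to BCM GPIO pin, matching the wiring described earlier.
SENSOR_PINS: Dict[int, int] = {1: 17, 2: 18}


def build_payload(sensor_id: int, reading: Reading) -> dict:
    """Shape one reading as the JSON object returned by the API."""
    humidity, temperature = reading
    return {
        "sensor_id": sensor_id,
        "sensor_type": "DHT22",  # assumed value
        "humidity": humidity,
        "temperature": temperature,
    }


def create_app(read: Callable[[int], Reading]):
    """Build the Flask app; read takes a GPIO pin and returns (humidity, temperature)."""
    from flask import Flask, abort, jsonify  # lazy import: only needed when serving

    app = Flask(__name__)

    @app.route("/api/v1/dht<int:sensor_id>")
    def dht(sensor_id: int):
        if sensor_id not in SENSOR_PINS:
            abort(404)
        return jsonify(build_payload(sensor_id, read(SENSOR_PINS[sensor_id])))

    return app


# On the Raspberry Pi the pieces could be wired together like this:
#   import Adafruit_DHT
#   app = create_app(lambda pin: Adafruit_DHT.read_retry(Adafruit_DHT.DHT22, pin))
#   app.run(host="0.0.0.0", port=5000)
```

Binding to 0.0.0.0 rather than 127.0.0.1 matters inside a container: a server that listens only on the container's loopback interface cannot be reached through a published port.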

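Now we can rebuild the Docker image with the command docker build -f Dockerfile-web -t iot-course/example3 . (again with the trailing dot). The log below appears to have been captured with a slightly different revision of the Dockerfile, so the file name, package names and step order differ a little from the listing above.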

pi@cluster2:~/code/dwbl $ docker build -f Dockerfile -t iot-course/example3 .

Sending build context to Docker daemon 5.12kB

Step 1/9 : FROM ubuntu:18.04

---> cd3a27be997b

Step 2/9 : RUN apt-get update -y

---> Using cache

---> 1518c1250bc9

Step 3/9 : RUN apt-get install -y python-pip python3

---> Using cache

---> 5deefee922bb

Step 4/9 : RUN pip install flask Adafruit_DHT

---> Using cache

---> e72fa71ed9d3

Step 5/9 : COPY . /app

---> 63e013ca7850

Step 6/9 : WORKDIR /app

---> Running in 7d24d0b81594

Removing intermediate container 7d24d0b81594

---> bcd3caf9b981

Step 7/9 : EXPOSE 5000

---> Running in ea8608124ed0

Removing intermediate container ea8608124ed0

---> c4b3bf50ab38

Step 8/9 : ENTRYPOINT ["python"]

---> Running in 5995adb1f3d9

Removing intermediate container 5995adb1f3d9

---> e8343c21b1bd

Step 9/9 : CMD ["webserver.py"]

---> Running in 277e4e51baca

Removing intermediate container 277e4e51baca

---> 2d5cc3d9e12b

Successfully built 2d5cc3d9e12b

Successfully tagged iot-course/example3:latest

And finally run the container with the command docker run -p 8080:5000 --privileged iot-course/example3. The -p 8080:5000 option publishes port 5000 of the container on port 8080 of the host.

pi@cluster2:~/code/dwbl $ docker run -p 8080:5000 --privileged iot-course/example3

* Serving Flask app "webserver" (lazy loading)

* Environment: production

WARNING: This is a development server. Do not use it in a production deployment.

Use a production WSGI server instead.

* Debug mode: on

* Running on http://0.0.0.0:5000/ (Press CTRL+C to quit)

Now, by accessing https://dwbl.dclabra.fi/api/v1/dht1 we get the humidity and temperature from the first sensor, and by accessing https://dwbl.dclabra.fi/api/v1/dht2 those from the second sensor. The Raspberry Pi 4 would have enough power to operate as an edge node and to store or buffer the measured values, but in this example we just wanted to publish the measured humidity and temperature values.
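Before relying on the public address, the API can be checked directly on the Raspberry Pi through the published host port. The sketch below uses only the Python standard library. It assumes that the container was started with -p 8080:5000 as above, that the route path is the same as in the public URLs, and that the response body is JSON; fetch_reading and http_get are hypothetical helper names.

```python
import json
from typing import Callable
from urllib.request import urlopen


def http_get(url: str) -> str:
    """Fetch a URL and return the response body as text."""
    with urlopen(url, timeout=5) as response:
        return response.read().decode("utf-8")


def fetch_reading(sensor_id: int, base_url: str = "http://localhost:8080",
                  get: Callable[[str], str] = http_get) -> dict:
    """Request one sensor reading through the published host port."""
    return json.loads(get(f"{base_url}/api/v1/dht{sensor_id}"))


# On the Raspberry Pi, with the container running:
#   print(fetch_reading(1))
```

The get parameter exists only so that the function can be exercised without a running server, for example by passing a function that returns a fixed JSON string.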

Mission accomplished!

Storing measurement data to InfluxDB database

For this experiment, we need to build a simple script that reads the sensor values from the REST API provided above and stores them in an InfluxDB time-series database. As the data is read over the internet, we can run these two containers (the datalogger application and the InfluxDB database) on any computer that is connected to the internet and running the Docker Engine, e.g. on a personal computer or on a virtual machine running in an IaaS or PaaS cloud.

Let's start with the datalogger application, which can be implemented as a simple Python script.

datalogger.py:

from influxdb import InfluxDBClient
import time
import requests


def main(host='influxdb', port=8086):
    """Instantiate a connection to the InfluxDB."""
    user = 'admin'
    password = 'insert-password-here'
    dbname = 'sensorhub'
    dbuser = 'sensorhub'
    dbuser_password = 'insert-password-here'

    client = InfluxDBClient(host, port, user, password, dbname)
    print("Switch user: " + dbuser)
    client.switch_user(dbuser, dbuser_password)

    counter = 0
    while True:
        # Alternate between sensor 1 and sensor 2
        sensor_id = (counter % 2) + 1
        counter = counter + 1
        print(sensor_id)

        # Read the latest values from the REST API
        resp = requests.get('https://dwbl.dclabra.fi/api/v1/dht' + str(sensor_id), timeout=10).json()

        json_body = [
            {
                "measurement": "temp_hum",
                "tags": {
                    "host": "dwbl.dclabra.fi",
                    "region": "fi-east",
                    "sensor_id": resp["sensor_id"],
                    "sensor_type": resp["sensor_type"],
                },
                # Timestamp in milliseconds, matching time_precision='ms' below
                "time": int(time.time() * 1000),
                "fields": {
                    "humidity": resp["humidity"],
                    "temperature": resp["temperature"]
                }
            }
        ]

        print("Write points: {0}".format(json_body))
        client.write_points(json_body, database=dbname, time_precision='ms', batch_size=10000, protocol='json')
        time.sleep(1)


if __name__ == '__main__':
    main('influxdb', 8086)
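One possible refinement, shown here only as a sketch, is to move the construction of the data point into a small function of its own so that it can be checked without a database or a network connection. The function name build_point and the key check are additions made for this illustration; the point layout mirrors the one in datalogger.py.

```python
import time
from typing import Mapping, Optional

REQUIRED_KEYS = ("sensor_id", "sensor_type", "humidity", "temperature")


def build_point(resp: Mapping, timestamp_ms: Optional[int] = None) -> dict:
    """Turn one API response into an InfluxDB point in the layout datalogger.py writes."""
    missing = [key for key in REQUIRED_KEYS if key not in resp]
    if missing:
        raise ValueError(f"response is missing keys: {missing}")
    if timestamp_ms is None:
        timestamp_ms = int(time.time() * 1000)
    return {
        "measurement": "temp_hum",
        "tags": {
            "host": "dwbl.dclabra.fi",
            "region": "fi-east",
            "sensor_id": resp["sensor_id"],
            "sensor_type": resp["sensor_type"],
        },
        "time": timestamp_ms,
        "fields": {
            # Cast to float so that a field keeps the same type on every write.
            "humidity": float(resp["humidity"]),
            "temperature": float(resp["temperature"]),
        },
    }
```

With such a helper, the loop body would reduce to something like client.write_points([build_point(resp)], database=dbname, time_precision='ms'). The float cast is a precaution: InfluxDB rejects a write if a field that was first stored as a float later arrives as an integer, or the other way round.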

Take some time to go through the Python script and try to understand it in detail. The next step is to containerize the datalogger application, which means that we need another Dockerfile. Let's name it Dockerfile-datalogger.

Dockerfile-datalogger:

# Switched base image to ready-made python image

FROM python:latest

COPY . /app

WORKDIR /app

RUN pip3 install -r requirements.txt

EXPOSE 8080

CMD ["python3", "datalogger.py"]

Contents of the additional requirements.txt file are shown below.

requirements.txt:

influxdb

requests

It simply lists the additional Python libraries that need to be installed for the application.

Everything looks quite similar to the previous Dockerfiles, except that we use the python:latest base image, which already includes a Python 3 interpreter. Let's proceed to the next step, the installation of the InfluxDB database, which can also be run in a container. But now we have two containers that need to be connected to each other. No worries, because Docker offers several ways to solve this. We can build and run separate containers and connect them manually, or we can use Docker Compose to define a multi-container setup in a docker-compose.yml file. Let us try the Docker Compose alternative. Take a moment to review the file contents below:

docker-compose.yml:

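version: "3"
services:
  influxdb:
    # Note: the INFLUXDB_* variables below are read by InfluxDB 1.x images,
    # so consider pinning a 1.x tag instead of relying on the default tag.
    image: influxdb
    container_name: influxdb
    volumes:
      - vol-sensorhub-data:/var/lib/influxdb
    environment:
      - INFLUXDB_DB=sensorhub
      - INFLUXDB_ADMIN_ENABLED=true
      - INFLUXDB_ADMIN_USER=admin
      - INFLUXDB_USER=sensorhub
      - INFLUXDB_USER_PASSWORD=insert-password-here
      - INFLUXDB_ADMIN_PASSWORD=insert-password-here
    networks:
      - sensorhub
  datalogger:
    # Assumes datalogger.py, requirements.txt and Dockerfile-datalogger are in ./datalogger
    build:
      context: ./datalogger
      dockerfile: Dockerfile-datalogger
    networks:
      - sensorhub
    depends_on:
      - influxdb
networks:
  sensorhub:
volumes:
  vol-sensorhub-data: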

Wow, that is amazing! There are two services, influxdb and datalogger, each with its own definition and configuration. The influxdb service uses a premade image from Docker Hub, selected with the image: key. The datalogger service is built from the source code provided in the previous example. Both services are attached to the sensorhub network, which is why the datalogger can reach the database simply by the host name influxdb.

The influxdb database is persisted by defining a Docker volume:

volumes:
  vol-sensorhub-data:

and using the volume in the influxdb service:

volumes:
  - vol-sensorhub-data:/var/lib/influxdb

Now we need to run the docker-compose up --build command for Docker Compose to start building the environment. The --build parameter makes sure that the services built from source code are always rebuilt. If you haven't changed the source code, feel free to leave out the --build parameter. It takes a while to build the services, but once the build is complete, they are also started.
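One detail worth knowing is that the depends_on list used here only controls the start order of the containers; it does not wait until InfluxDB actually accepts connections. The datalogger may therefore try to connect too early on the first start. A minimal sketch of a workaround, using only the Python standard library, is to wait for the database port before creating the client. The function name wait_for_port and the timeout values are assumptions for this illustration; the host name influxdb and port 8086 come from the example above.

```python
import socket
import time


def wait_for_port(host: str, port: int, timeout_s: float = 60.0,
                  interval_s: float = 1.0) -> bool:
    """Return True once a TCP connection to host:port succeeds, False after timeout_s."""
    deadline = time.monotonic() + timeout_s
    while True:
        try:
            with socket.create_connection((host, port), timeout=interval_s):
                return True
        except OSError:
            if time.monotonic() >= deadline:
                return False
            time.sleep(interval_s)


# In datalogger.py this could be called before InfluxDBClient is created:
#   if not wait_for_port('influxdb', 8086):
#       raise SystemExit("InfluxDB did not become reachable in time")
```

An open TCP port does not guarantee that the database has finished its own initialization, so this is a simple first line of defence rather than a full health check.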

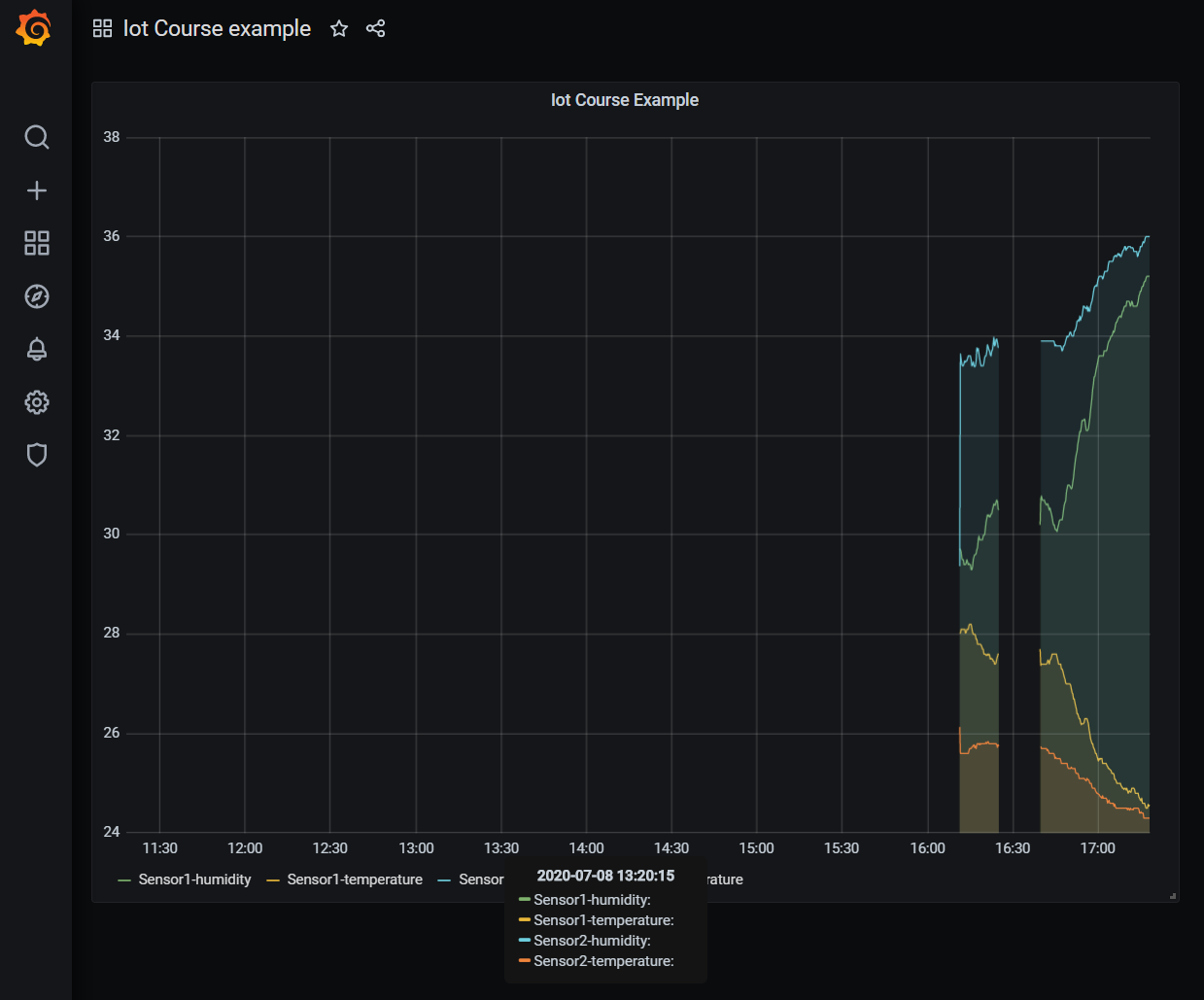

Dashboard with Grafana

The last step is to produce a nice visualization of the measurement data. In this example we add a Grafana dashboard and configure InfluxDB as its data source. Grafana is also run in a Docker container, so it is added to the docker-compose.yml file.

docker-compose.yml:

version: "3"
services:
  influxdb:
    # Note: the INFLUXDB_* variables below are read by InfluxDB 1.x images,
    # so consider pinning a 1.x tag instead of relying on the default tag.
    image: influxdb
    container_name: influxdb
    volumes:
      - vol-sensorhub-data:/var/lib/influxdb
    environment:
      - INFLUXDB_DB=sensorhub
      - INFLUXDB_ADMIN_ENABLED=true
      - INFLUXDB_ADMIN_USER=admin
      - INFLUXDB_USER=sensorhub
      - INFLUXDB_USER_PASSWORD=insert-password-here
      - INFLUXDB_ADMIN_PASSWORD=insert-password-here
    networks:
      - sensorhub
  grafana:
    image: grafana/grafana:latest
    container_name: grafana
    ports:
      - "8888:3000"
    volumes:
      - vol-grafana-data:/var/lib/grafana
    environment:
      - GF_SECURITY_ADMIN_PASSWORD=insert-password-here
      - GF_SERVER_ROOT_URL=http://grafana
    networks:
      - sensorhub
  datalogger:
    # Assumes datalogger.py, requirements.txt and Dockerfile-datalogger are in ./datalogger
    build:
      context: ./datalogger
      dockerfile: Dockerfile-datalogger
    networks:
      - sensorhub
    depends_on:
      - influxdb
networks:
  sensorhub:
volumes:
  vol-sensorhub-data:
  vol-grafana-data:

Simple as that! We added another persistent volume for Grafana. Now we have all three containers up and running, and we can continue by configuring the Grafana dashboard. The solution for this example is shown in a video on YouTube. Take a moment to go through it.

Picture 12. Grafana dashboard.
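The dashboard configuration itself is only shown in the video. As a rough sketch of what a panel might query, the helper below builds an InfluxQL query string for the temp_hum measurement written by the datalogger. The function name is hypothetical and the exact query used in the video may differ; $timeFilter and $__interval are placeholders that Grafana fills in for InfluxDB data sources.

```python
def grafana_query(field: str, measurement: str = "temp_hum",
                  tag: str = "sensor_id") -> str:
    """Build an InfluxQL query of the kind a Grafana panel could use for one field."""
    return (
        f'SELECT mean("{field}") FROM "{measurement}" '
        f'WHERE $timeFilter GROUP BY time($__interval), "{tag}" fill(null)'
    )


print(grafana_query("temperature"))
print(grafana_query("humidity"))
```

Because Grafana runs on the same Compose network as the database, the data source URL would presumably be http://influxdb:8086 with the database name sensorhub. Grouping by the sensor_id tag should give one series per sensor in the same panel.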

Now that everything is dockerized, you can run your datalogger, grafana and influxdb services on separate CPU nodes if you wish!

And that's all for this chapter.